Today, most generative AI systems try to solve everything with a single "brain." One model, one prompt, one answer.

But that's not how the most efficient systems in nature work. Some of the most complex decisions, like where an entire colony should live, are made without any central intelligence at all. Bees are a good example: a swarm reaches consensus and stays resilient through collective intelligence, an approach that remains largely underexplored in modern AI systems.

This post aims to explain, in simple terms, how these ideas inspired us at fjord to design AI systems that actually work outside of a demo.

What problem are we trying to solve?

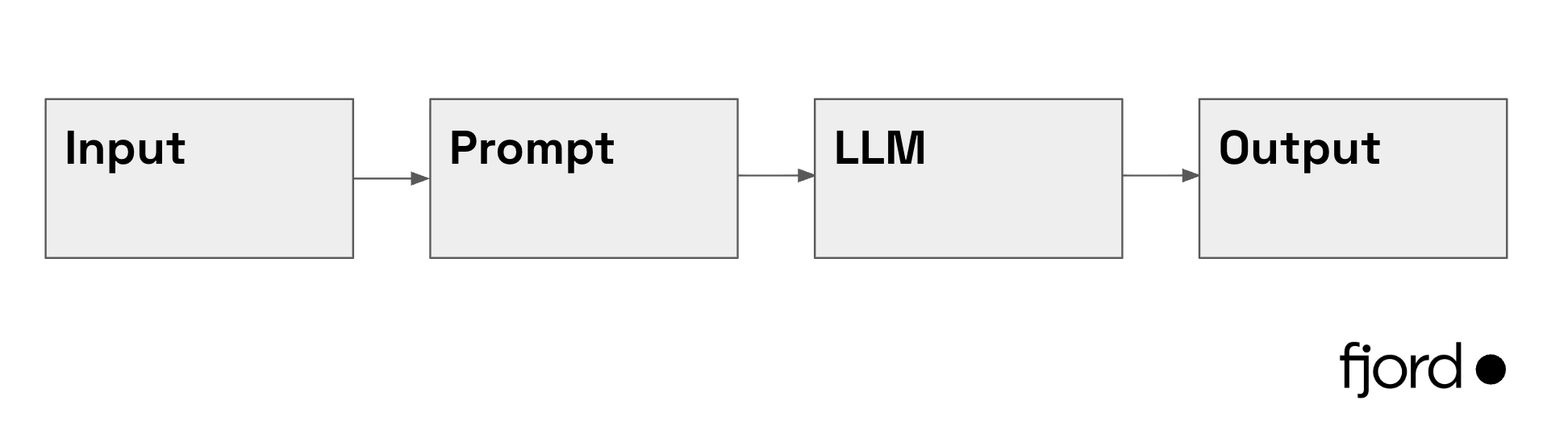

Most AI systems today are built like this: an input is inserted into a prompt, sent to an LLM (ChatGPT, Claude, etc.), and we hope for a useful answer.

This works well for many simple tasks but starts to break down when problems become complex, ambiguous, or multivariate.

Placing the entire responsibility for success on a single operation is fragile. As inputs get messier and the prompt keeps growing, the system starts hallucinating, getting confused, or even producing false positives.

We see it all the time:

- A chatbot that works perfectly in a demo and fails in production.

- An extractor that gets it right 80% of the time, and nobody can explain the other 20%.

- A system that doesn't scale because it depends on a single prompt that keeps getting longer.

Why does this happen?

LLMs, as amazing as they are, are not omnipotent. We can't expect them to single-handedly solve problems that require multiple steps, multiple sources of information, and multiple validations.

Designing fundamentally centralized systems goes against what our bee friends have taught us. Maybe it's time to go back to the drawing board.

How do bees solve complex decisions?

When a bee colony needs a new home, the decision is critical. A poor choice can mean the death of the entire colony. The process goes like this:

- Scout bees explore different sites independently.

- Each scout returns and signals with an intensity that reflects the quality of the site.

- Other bees visit and validate those signals—they don't trust blindly.

- Scouts promoting inferior sites receive stop signals from rivals, actively suppressing the weaker options.

- Over time, the best options accumulate support while the worst are filtered out.

- The colony converges on a decision.

No central authority. No reliance on a single actor. Just contributions and a continuous process of iteration. Each bee has a simple, well-defined task. But together, they form a powerful and effective collective intelligence system. The key isn't biology—it's the structure: specialized tasks, distributed validation, and convergence.
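The same structure can be sketched as a toy, deterministic model in Python. This is not a biological simulation: `support[site]` is the fraction of scouts committed to each site, the quality scores are in [0, 1], and every rate below is a made-up illustrative parameter. We also assume a simple quorum threshold as the test for "the colony converges." Each round, uncommitted scouts discover sites on their own, dancing scouts recruit others who commit only in proportion to the quality they observe, scouts drift away from mediocre sites, and scouts backing rival sites send stop signals.

```python
from __future__ import annotations


def choose_nest(
    qualities: dict[str, float],   # site -> quality in [0, 1] (illustrative)
    discovery: float = 0.05,       # independent exploration rate (assumed)
    recruitment: float = 0.5,      # strength of dance-based recruitment (assumed)
    abandonment: float = 0.1,      # drift away from mediocre sites (assumed)
    stop_signal: float = 0.5,      # cross-inhibition between rival sites (assumed)
    quorum: float = 0.5,           # fraction of scouts needed to decide (assumed)
    max_rounds: int = 200,
) -> str | None:
    """Toy mean-field model of nest-site selection.

    Returns the chosen site, or None if no site reaches the quorum.
    Rates must stay small so every fraction stays between 0 and 1.
    """
    support = {site: 0.0 for site in qualities}
    for _ in range(max_rounds):
        total = sum(support.values())
        uncommitted = 1.0 - total
        new_support = {}
        for site, quality in qualities.items():
            s = support[site]
            # Scouts explore independently; better sites win more of them.
            found = discovery * uncommitted * quality
            # Dancers recruit others, who inspect the site themselves and
            # commit only in proportion to its quality (no blind trust).
            recruited = recruitment * uncommitted * s * quality
            # Scouts lose interest in poor sites on their own...
            lost = abandonment * s * (1.0 - quality)
            # ...and are actively stopped by scouts backing rival sites.
            stopped = stop_signal * s * (total - s)
            new_support[site] = s + found + recruited - lost - stopped
        support = new_support  # synchronous update: every site saw the same round
        leader = max(support, key=support.get)
        if support[leader] >= quorum:
            return leader
    return None


sites = {"hollow_oak": 0.9, "barn_wall": 0.6, "rock_crevice": 0.3}
print(choose_nest(sites))  # → hollow_oak
```

With these parameters the best site holds the most support in every round, so it is the one that crosses the quorum, even though no individual scout ever compares all the options.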

What can we learn from them?

Instead of designing AI systems where all the responsibility falls on a single node, we can design distributed systems made of multiple interconnected operations, typically arranged as a directed acyclic graph (DAG).
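A minimal sketch of a DAG runner, using the standard library's `graphlib.TopologicalSorter` (Python 3.9+). The node names and toy functions are hypothetical stand-ins; in a real system each node would wrap a model call or a tool. Each node runs exactly once, only after all of its dependencies have finished, and a cycle in the graph raises `graphlib.CycleError` instead of running forever.

```python
from __future__ import annotations

from graphlib import TopologicalSorter
from typing import Any, Callable

# A node takes a context dict and returns its output.
Node = Callable[[dict], Any]


def run_dag(nodes: dict[str, Node], deps: dict[str, set[str]], inputs: dict[str, Any]) -> dict[str, Any]:
    """Run every node once, after all of its dependencies.

    Each node receives the original inputs plus the outputs of its direct
    dependencies, keyed by the dependency's name.
    """
    graph = {name: deps.get(name, set()) for name in nodes}
    results: dict[str, Any] = {}
    for name in TopologicalSorter(graph).static_order():  # raises CycleError on cycles
        upstream = {dep: results[dep] for dep in graph[name]}
        results[name] = nodes[name]({**inputs, **upstream})
    return results


# Hypothetical toy nodes standing in for model calls.
nodes = {
    "normalize": lambda ctx: ctx["text"].strip().lower(),
    "word_count": lambda ctx: len(ctx["normalize"].split()),
    "keywords": lambda ctx: sorted(w for w in ctx["normalize"].split() if len(w) > 5),
    "report": lambda ctx: {"words": ctx["word_count"], "keywords": ctx["keywords"]},
}
deps = {
    "word_count": {"normalize"},
    "keywords": {"normalize"},
    "report": {"word_count", "keywords"},
}
print(run_dag(nodes, deps, {"text": "  Scouts explore sites independently "})["report"])
# → {'words': 4, 'keywords': ['explore', 'independently', 'scouts']}
```

`word_count` and `keywords` depend only on `normalize`, so they are independent of each other and could run in parallel; the sorter only guarantees that both finish before `report`.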

A concrete example

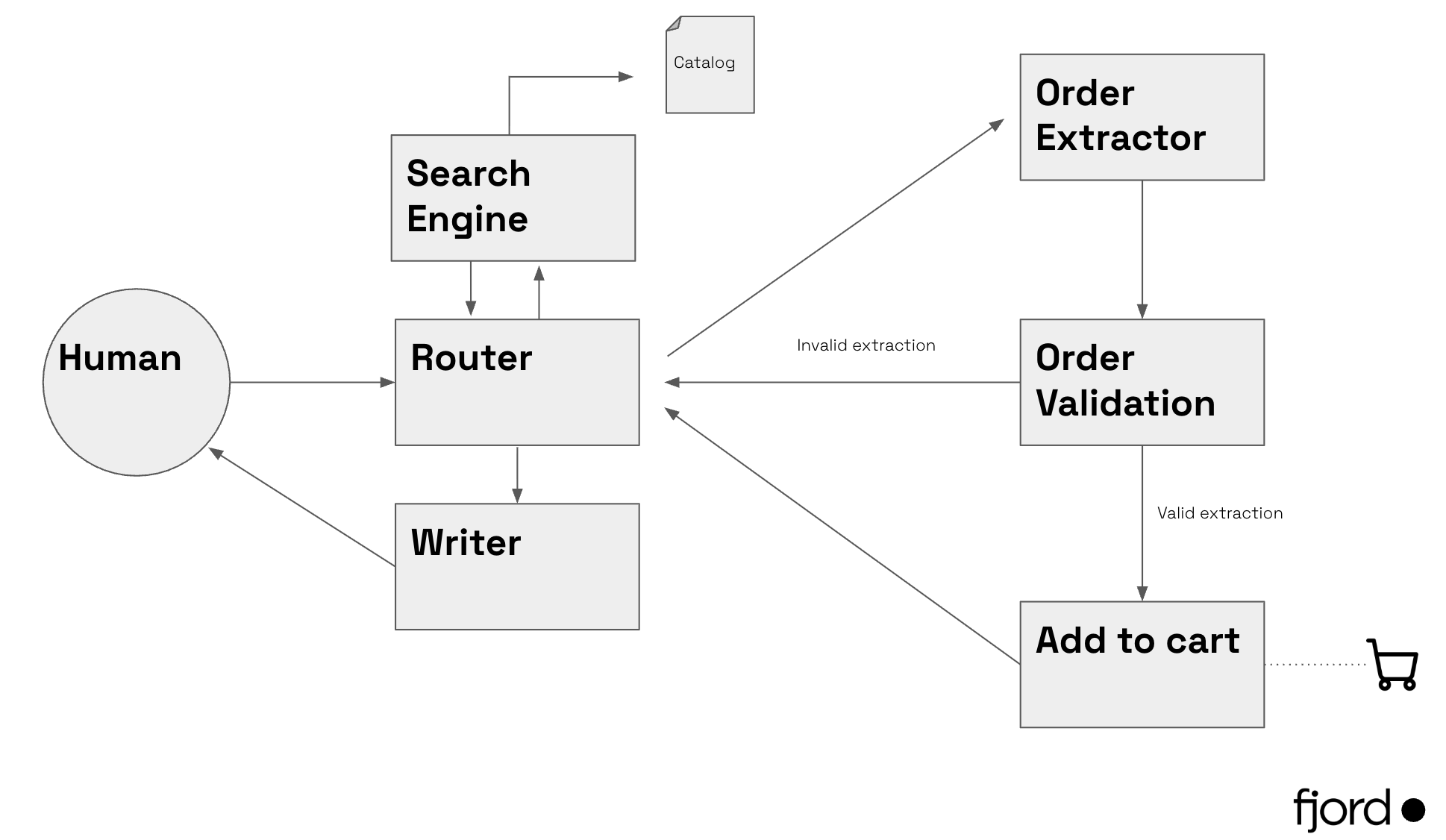

Imagine a sales chatbot that talks to customers and has access to the product catalog and other resources. The simplified graph above splits the work into nodes, each with a specific, bounded role:

- Router → Oversees the process and selects the next step.

- Catalog Search → Searches the catalog for related items.

- Writer → Drafts messages for the user (follow-up questions, confirmations, etc.).

- Order Extractor → Extracts the SKU and quantity the customer wants.

- Order Validation → Validates that the extraction is correct.

- Add to Cart → If the extraction is valid, adds it to the cart.

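Instead of betting everything on a single answer, we get a step-by-step process of discovery and construction, where each step can be checked before the next one acts on it: the cart is only updated after Order Validation passes.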
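Below is a minimal sketch of one conversational turn through this graph, in Python. Every model call is replaced by a deterministic stand-in (keyword routing, regex extraction), and the catalog, SKUs, and function names are all hypothetical. The point is the shape: each node does one bounded job, and Add to Cart only runs when Order Validation returns no errors.

```python
from __future__ import annotations

import re
from dataclasses import dataclass, field

# Hypothetical catalog; a real Catalog Search node would query an index or database.
CATALOG = {"SKU-100": "oak desk", "SKU-200": "desk lamp", "SKU-300": "office chair"}


@dataclass
class Turn:
    message: str
    cart: dict[str, int] = field(default_factory=dict)
    reply: str = ""


def router(turn: Turn) -> str:
    """Select the next step (stand-in for a small classification model)."""
    return "order" if re.search(r"\bbuy\b", turn.message, re.IGNORECASE) else "search"


def catalog_search(turn: Turn) -> list[str]:
    """Return SKUs whose description shares at least one word with the message."""
    words = set(re.findall(r"[a-z]+", turn.message.lower()))
    return [sku for sku, desc in CATALOG.items() if words & set(desc.split())]


def order_extractor(turn: Turn) -> dict:
    """Stand-in for a model that returns structured data, not prose."""
    sku = re.search(r"SKU-\d+", turn.message)
    qty = re.search(r"\b(\d+)\s*x\b", turn.message)  # e.g. "2x"
    return {"sku": sku.group() if sku else None, "quantity": int(qty.group(1)) if qty else 1}


def order_validation(order: dict) -> list[str]:
    """Check the extraction before anything touches the cart."""
    errors = []
    if order["sku"] not in CATALOG:
        errors.append(f"unknown SKU: {order['sku']}" if order["sku"] else "no SKU found")
    if order["quantity"] < 1:
        errors.append("quantity must be at least 1")
    return errors


def add_to_cart(turn: Turn, order: dict) -> None:
    turn.cart[order["sku"]] = turn.cart.get(order["sku"], 0) + order["quantity"]


def writer(kind: str, payload) -> str:
    """Draft the user-facing message (stand-in for a writing model)."""
    if kind == "added":
        return f"Added {payload['quantity']} x {CATALOG[payload['sku']]} to your cart."
    if kind == "invalid":
        return "Could you confirm the product? (" + "; ".join(payload) + ")"
    if payload:
        return "I found: " + ", ".join(CATALOG[sku] for sku in payload) + "."
    return "I couldn't find that. Could you describe it differently?"


def handle_turn(turn: Turn) -> Turn:
    """One pass through the graph for a single customer message."""
    if router(turn) == "order":
        order = order_extractor(turn)
        errors = order_validation(order)
        if errors:
            turn.reply = writer("invalid", errors)
        else:
            add_to_cart(turn, order)
            turn.reply = writer("added", order)
    else:
        turn.reply = writer("results", catalog_search(turn))
    return turn


print(handle_turn(Turn("Do you have a desk lamp?")).reply)  # → I found: oak desk, desk lamp.
print(handle_turn(Turn("I want to buy 2x SKU-200")).reply)  # → Added 2 x desk lamp to your cart.
```

Because extraction and validation are separate nodes, a message like "I want to buy SKU-999" produces a follow-up question instead of a bad cart entry, and you can measure each node's accuracy on its own.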

What it takes in practice

These systems don't emerge on their own. They require deliberate design, structure, and engineering:

- Clear roles and boundaries for each agent or model. If a node tries to do too much, we're back to the original problem.

- The right LLM for each task. Not everything needs GPT-4 or Claude Opus. Smaller, specialized models are often faster, cheaper, and more predictable for scoped tasks.

- Defined communication protocols. Nodes need to speak the same language: each one should have an explicit contract for the inputs it accepts and the outputs it returns.

- Evaluation mechanisms at every step. Without per-node metrics, you can't tell where the system is failing. Observability is not optional.

- Structured data as outputs. JSON, not prose. This lets nodes connect without ambiguity.
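As a sketch of the last three points, the following Python defines a contract for the Order Extractor's output, a parser that rejects anything that is not valid JSON matching that contract, and a per-node counter of valid and invalid outputs. The field names, the node name, and the `Counter`-based metrics are illustrative assumptions; in production you would likely use a schema-validation library and your existing observability stack.

```python
from __future__ import annotations

import json
from collections import Counter
from dataclasses import dataclass

# Per-node counters: a minimal stand-in for real observability tooling.
METRICS: Counter[str] = Counter()


@dataclass(frozen=True)
class OrderLine:
    """Contract for the Order Extractor's output (field names are illustrative)."""
    sku: str
    quantity: int


def parse_order_line(raw: str) -> OrderLine:
    """Turn a model's raw reply into a typed object, or raise ValueError."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    sku, qty = data.get("sku"), data.get("quantity")
    if not isinstance(sku, str) or not sku:
        raise ValueError("sku must be a non-empty string")
    # bool is a subclass of int in Python, so reject it explicitly.
    if not isinstance(qty, int) or isinstance(qty, bool) or qty < 1:
        raise ValueError("quantity must be a positive integer")
    return OrderLine(sku=sku, quantity=qty)


def run_node(name: str, raw_output: str) -> OrderLine | None:
    """Parse a node's output and record whether it met its contract."""
    try:
        result = parse_order_line(raw_output)
    except ValueError:
        METRICS[f"{name}.invalid"] += 1
        return None
    METRICS[f"{name}.ok"] += 1
    return result
```

Feeding `run_node("order_extractor", ...)` the raw replies `'{"sku": "SKU-200", "quantity": 2}'`, `'Sure! You want two lamps.'`, and `'{"sku": "SKU-200", "quantity": 0}'` returns an `OrderLine` for the first and `None` for the other two, leaving one `order_extractor.ok` and two `order_extractor.invalid` in `METRICS`. Those per-node counts are what tell you where the system is failing.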

This approach has a higher upfront cost than a long prompt. But it's the difference between an impressive prototype and a system that works in production with real data.

Conclusion

Nature has spent millions of years solving coordination problems at scale. Bees, ants, and entire ecosystems don't rely on centralized intelligence. They rely on interaction, consensus, and coordination.

AI systems should follow the same path.

At fjord, we believe in well-designed systems—distributed systems for companies that are past the "let's try a chatbot" phase and need something that actually works. If your process today depends on a single model doing too much, we can help you scale it.